The goal of this project is to experiment with transmitting images through the telephone using tone volume (amplitude).

First, I created a Processing sketch that loops through the pixels of an image and analyzes their brightness. For each pixel it emits a tone whose amplitude and frequency are determined by the pixel's brightness, so darker pixels create lower, quieter sounds and brighter pixels create louder, higher-frequency sounds. The sketch runs at 15 frames per second and sends one pixel per frame, so tones are generated at a rate of 15 pixels per second.
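The original sketch isn't shown, so here is a minimal sketch of the pixel-to-tone mapping in plain Java (Processing sketches are translated to Java, so the logic carries over). The frequency range (`MIN_FREQ`, `MAX_FREQ`), the 44.1 kHz sample rate, the linear mappings, the max-of-RGB brightness, and the class and method names are all assumptions for illustration. Only the brightness-to-amplitude/frequency relationship and the 15 pixels per second rate come from the description above.

```java
// Hypothetical encoder: one short sine tone per pixel, louder and higher for brighter pixels.
final class ToneEncoder {
    static final int SAMPLE_RATE = 44100;     // assumed audio sample rate (Hz)
    static final int PIXELS_PER_SECOND = 15;  // one pixel per frame at 15 fps
    static final double MIN_FREQ = 200.0;     // hypothetical frequency for black
    static final double MAX_FREQ = 2000.0;    // hypothetical frequency for white

    static int clamp(int v, int lo, int hi) {
        return Math.max(lo, Math.min(hi, v));
    }

    /** HSB-style brightness of a packed 0xRRGGBB pixel: the largest of R, G, B. */
    static int brightness(int rgb) {
        int r = (rgb >> 16) & 0xFF;
        int g = (rgb >> 8) & 0xFF;
        int b = rgb & 0xFF;
        return Math.max(r, Math.max(g, b));
    }

    /** Brightness 0..255 mapped linearly to amplitude 0..1 (darker = quieter). */
    static double amplitude(int brightness) {
        return clamp(brightness, 0, 255) / 255.0;
    }

    /** Brightness 0..255 mapped linearly to MIN_FREQ..MAX_FREQ (darker = lower). */
    static double frequency(int brightness) {
        double t = clamp(brightness, 0, 255) / 255.0;
        return MIN_FREQ + t * (MAX_FREQ - MIN_FREQ);
    }

    /** Audio samples (-1..1) for one pixel's tone, lasting 1/15 of a second. */
    static double[] toneForPixel(int brightness) {
        int n = SAMPLE_RATE / PIXELS_PER_SECOND;  // 2940 samples per pixel
        double amp = amplitude(brightness);
        double freq = frequency(brightness);
        double[] samples = new double[n];
        for (int i = 0; i < n; i++) {
            samples[i] = amp * Math.sin(2 * Math.PI * freq * i / SAMPLE_RATE);
        }
        return samples;
    }
}
```

At 44.1 kHz each pixel's tone is 44100 / 15 = 2940 samples long; playing those buffers back to back gives the 15 pixels per second rate.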

Second, I used the Megaphone client to read the amplitude of the incoming audio on a telephone call and reassemble the image at the same rate of 15 pixels per second.
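On the receiving side, the reassembly can be sketched as filling a pixel buffer row by row, one reading per frame. How Megaphone delivers the input level is not shown here; this hypothetical `ImageReceiver` simply assumes some callback hands it a level between 0 and 1 fifteen times a second, and maps that level straight to a gray value.

```java
// Hypothetical receiver: one amplitude reading per frame becomes one gray pixel.
final class ImageReceiver {
    final int width;
    final int height;
    final int[] gray;   // row-major grayscale pixels, 0..255
    int index = 0;      // next pixel to fill

    ImageReceiver(int width, int height) {
        this.width = width;
        this.height = height;
        this.gray = new int[width * height];
    }

    /** Call once per frame (15 times a second) with the current input level, nominally 0..1. */
    void onFrame(double level) {
        if (isComplete()) return;                         // ignore readings after the last pixel
        double clamped = Math.max(0.0, Math.min(1.0, level));
        gray[index] = (int) Math.round(clamped * 255);    // louder = brighter
        index++;
    }

    boolean isComplete() {
        return index >= gray.length;
    }

    int pixelAt(int x, int y) {
        return gray[y * width + x];
    }
}
```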

Although my first attempt was very slow, I was really happy with the result.

The transmission was done with a phone connected to the Megaphone app, which was picking up the computer's audio output.

Next, I experimented with lower-resolution images, sampling every 5th or every 2nd pixel. These were much less interesting but let me work more quickly. I also routed the output of Processing through SoundFlowerBed into the input of Skype, so that I wouldn't have to listen to the sound, which by this point was pretty annoying.
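A minimal sketch of the lower-resolution sampling, assuming "every 5 or 2 pixels" means stepping by that amount in both x and y (which matches the 2×2 transmission described below). The `Downsampler` name and its helpers are hypothetical; the 15 pixels per second rate is from the text.

```java
// Hypothetical downsampler: keep every step-th pixel in each direction and estimate send time.
final class Downsampler {
    /** Number of pixels sent when sampling every step-th pixel in x and y. */
    static int sampledCount(int width, int height, int step) {
        int cols = (width + step - 1) / step;   // ceil(width / step)
        int rows = (height + step - 1) / step;  // ceil(height / step)
        return cols * rows;
    }

    /** Row-major pixels in, row-major sampled pixels out. */
    static int[] sample(int[] pixels, int width, int height, int step) {
        int[] out = new int[sampledCount(width, height, step)];
        int k = 0;
        for (int y = 0; y < height; y += step) {
            for (int x = 0; x < width; x += step) {
                out[k++] = pixels[y * width + x];
            }
        }
        return out;
    }

    /** Transmission time in seconds at 15 pixels per second. */
    static double seconds(int width, int height, int step) {
        return sampledCount(width, height, step) / 15.0;
    }
}
```

Doubling the step cuts the pixel count, and therefore the transmission time, by roughly a factor of four.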

This was the second attempt. It rendered very quickly (probably in about a minute) but lost a lot of information.

This was a 2×2 pixel transmission that took about 15 minutes. It also lost some information and contrast.

The received audio level is mapped to a shade of gray between 0 and 255. Because the range of received values tends to vary with both the output settings and the connection, I am now trying to find a way for the program to learn to adjust its own range based on the current input. For example, if the maximum received value is constantly being hit, the maximum should increase a little, and while it is not being reached it can decay at a slower pace. The same can be applied to the minimum volume received.
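The mapping from received level to gray can be sketched as a linear rescale with clamping, the same idea as Processing's `map()` followed by `constrain()`. It also shows why a fixed range costs contrast: if the input never rises above 0.6 on a 0 to 1 scale, the brightest gray produced is 153 rather than 255. `GrayMapper` and the mid-gray fallback for an empty range are hypothetical.

```java
// Hypothetical mapper: rescale a received level from [lo, hi] to a gray value 0..255.
final class GrayMapper {
    static int toGray(double level, double lo, double hi) {
        if (hi <= lo) return 128;                 // empty range: fall back to mid-gray
        double t = (level - lo) / (hi - lo);      // position of level within the range
        t = Math.max(0.0, Math.min(1.0, t));      // clamp readings outside the range
        return (int) Math.round(t * 255);
    }
}
```

For example, `toGray(0.6, 0.0, 1.0)` gives 153, while narrowing the range to the levels actually received, `toGray(0.6, 0.3, 0.6)`, gives 255.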

In this next attempt, I created the following learning mechanism:

This seems way too complicated for what I want, but it works, and I'm not sure how to simplify it. The volume range is 0 to 1, but in the Megaphone program neither limit is ever reached except by the occasional random accident. So I start both the lower and upper volume limits (minimumVol and maximumVol) at 0.5 and keep adjusting them throughout the transmission to reflect the input.
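The original mechanism isn't reproduced here, so this is only a minimal sketch of the idea described above, not the author's code: both limits start at 0.5 (keeping the `minimumVol` and `maximumVol` names), a limit moves quickly toward any reading that exceeds it, and otherwise drifts slowly back toward the readings. The `RISE` and `DECAY` rates and the `AdaptiveRange` class are hypothetical and would need tuning.

```java
// Hypothetical self-adjusting volume range; only the 0.5 starting point comes from the text.
final class AdaptiveRange {
    static final double RISE = 0.1;     // fraction of the gap closed when a limit is exceeded
    static final double DECAY = 0.001;  // much slower drift back when a limit is not reached

    double minimumVol = 0.5;
    double maximumVol = 0.5;

    /** Update both limits with one reading and return that reading as a gray value 0..255. */
    int update(double level) {
        if (level > maximumVol) {
            maximumVol += (level - maximumVol) * RISE;    // widen quickly toward loud input
        } else {
            maximumVol -= (maximumVol - level) * DECAY;   // shrink slowly while not reached
        }
        if (level < minimumVol) {
            minimumVol -= (minimumVol - level) * RISE;    // widen quickly toward quiet input
        } else {
            minimumVol += (level - minimumVol) * DECAY;   // shrink slowly while not reached
        }
        double span = maximumVol - minimumVol;
        if (span <= 0) return 128;                        // no range learned yet: mid-gray
        double t = (level - minimumVol) / span;
        t = Math.max(0.0, Math.min(1.0, t));
        return (int) Math.round(t * 255);
    }
}
```

Because each limit only moves part of the way toward a reading, the minimum never rises above the maximum, and a single random spike stretches the range only a little.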

The result had great color range compared to the last one:

It seems to have worked, and hopefully this means I won't have to adjust the code for every situation, since it can adjust itself.

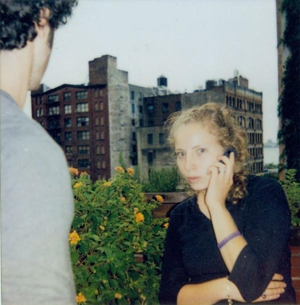

Now for the full-resolution image, which by my calculation should take exactly one hour to transmit:
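The one-hour figure follows directly from the transmission rate. As a worked check, assuming the full-resolution image is the same one sent earlier and that its sides are divisible by 2:

$$
\begin{aligned}
15\ \tfrac{\text{pixels}}{\text{s}} \times 3600\ \text{s} &= 54{,}000\ \text{pixels at full resolution} \\
\frac{54{,}000}{2 \times 2} = 13{,}500\ \text{pixels}, \qquad \frac{13{,}500\ \text{pixels}}{15\ \text{pixels/s}} &= 900\ \text{s} = 15\ \text{min}
\end{aligned}
$$

which agrees with the roughly 15 minutes the 2×2 transmission took.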

I got cut off at the same moment as the last one; I guess it is a Skype or Megaphone time limit. Still, I think the contrast and color mapping are better.

Next, I set up a context on ITP's Asterisk server to dial Megaphone, and routed the computer's audio through SoundFlower to Zoiper. Once I start the Megaphone sketch and call it via Zoiper, the fax is transmitted through the ITP Asterisk server, which unfortunately cuts me off at random intervals:

But the result is really interesting. I think the black dots are a result of the crackling I heard through Zoiper.

The second attempt got cut off at the same time, so the next step is to have the sketch keep drawing to hold its place, so that I can call it back.
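One way to hold the sketch's place is to advance the pixel index on the frame clock rather than on the received signal, so the receiver stays in step with the sender even while the call is down, and a call-back picks up at the right pixel. This is a hypothetical sketch: it assumes the sending sketch keeps playing at 15 pixels per second during the dropout, and `ResumableReceiver`, the `connected` flag, and the `MISSING` marker are all illustrative. Detecting whether the call is connected is not shown.

```java
// Hypothetical receiver that keeps counting pixels while the call is dropped.
final class ResumableReceiver {
    static final int MISSING = -1;   // marker for pixels sent while the call was down
    final int[] gray;                // row-major grayscale pixels, 0..255 or MISSING
    int index = 0;                   // position in the image, driven by the frame clock

    ResumableReceiver(int pixelCount) {
        gray = new int[pixelCount];
        for (int i = 0; i < pixelCount; i++) gray[i] = MISSING;
    }

    /** Call every frame (15 per second), whether or not a call is connected. */
    void onFrame(boolean connected, double level) {
        if (index >= gray.length) return;             // image finished
        if (connected) {
            double t = Math.max(0.0, Math.min(1.0, level));
            gray[index] = (int) Math.round(t * 255);
        }
        index++;   // always advance, so the position keeps pace with the sender
    }

    int missingCount() {
        int n = 0;
        for (int g : gray) if (g == MISSING) n++;
        return n;
    }
}
```

Pixels that arrive during a dropout stay marked as missing instead of shifting the rest of the image out of place.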
